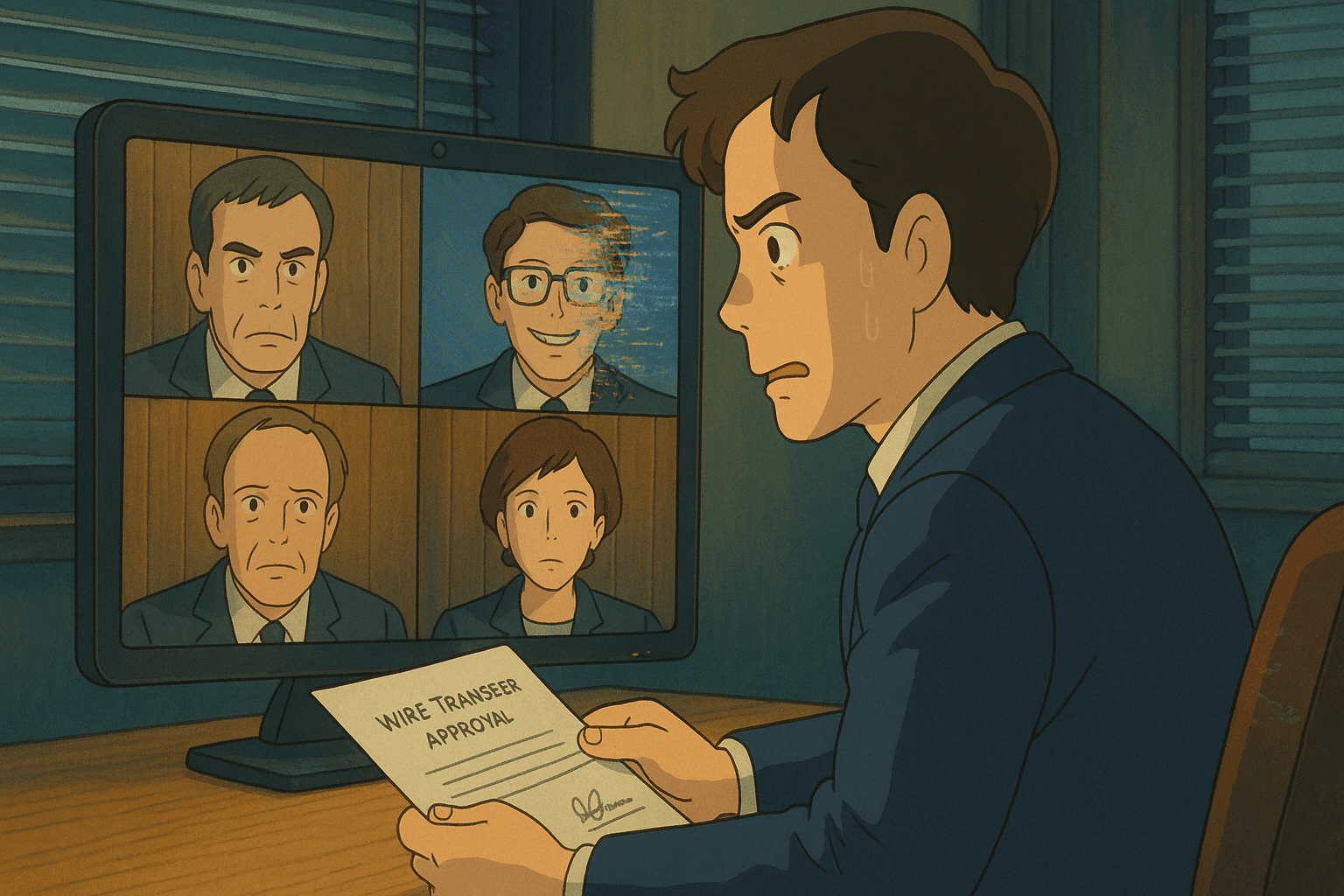

“It sounded exactly like him…”: How UK Engineering Giant Arup Lost £20 Million to a Deepfake Scam

In early 2024, a finance worker in Arup’s Hong Kong office saw nothing unusual in the video call. The company’s UK-based chief financial officer needed urgent approval for a confidential acquisition, and several familiar colleagues joined to discuss the details. After a thorough discussion, the employee authorized 15 transfers totalling $25.5 million (roughly £20 million). Only weeks later did the devastating truth emerge: every person on that call, except the victim, was an AI-generated deepfake. The fraudsters had cloned the voices and likenesses of senior executives, including the CFO, and staged a high-stakes video conference. Arup later confirmed that “fake voices and images were used” and that none of its technical systems were breached; the company had fallen for “technology-enhanced social engineering”.

The incident has been called a wake-up call for the corporate world, but it was not the first time deepfakes were used for fraud or deception. In 2019, scammers used AI to mimic a chief executive’s voice and trick a UK energy company into transferring €220,000 (about $243,000). During the 2023 UK Labour Party conference, a deepfake audio clip falsely portrayed Keir Starmer abusing his staff. According to a 2025 report, deepfakes of public figures such as Martin Wolf, Taylor Swift, and Elon Musk have fueled investment scams and false promotions across social media.

What Are Deepfakes?

Deepfakes are synthetic media — images, audio, or video — generated or manipulated using AI technologies like neural networks and GANs.

A generative adversarial network (GAN) is a deep learning architecture in which two neural networks, the generator and the discriminator, compete against each other. The generator learns to produce new samples that resemble its training data, while the discriminator tries to tell those samples apart from real ones. This competition drives increasingly realistic outputs, ideally until the fake data is hard to distinguish from the original.
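To make the generator-versus-discriminator loop concrete, here is a deliberately tiny sketch in plain Python. Everything in it is an assumption made for illustration: the "real data" is just numbers drawn from a bell curve centred on 3, the generator is a one-line function `a*z + b`, the discriminator is a single logistic unit, and the function name `train_toy_gan` and all hyperparameters are invented. Real deepfake models use deep networks on images or audio, not anything this small.

```python
import math
import random


def sigmoid(t):
    # Numerically stable logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=4000, batch=64, d_lr=0.1, g_lr=0.02, seed=0):
    """Toy 1-D GAN: all names and settings here are illustrative assumptions."""
    rng = random.Random(seed)
    real_mean, real_std = 3.0, 1.0  # "real" data: samples from N(3, 1)
    a, b = 1.0, 0.0                 # generator: G(z) = a*z + b, z ~ N(0, 1)
    w, c = 0.0, 0.0                 # discriminator: D(x) = sigmoid(w*x + c)

    for _ in range(steps):
        # Discriminator step: gradient ascent on log D(real) + log(1 - D(fake)).
        grad_w = grad_c = 0.0
        for _ in range(batch):
            x_real = rng.gauss(real_mean, real_std)
            x_fake = a * rng.gauss(0.0, 1.0) + b
            p_real = sigmoid(w * x_real + c)
            p_fake = sigmoid(w * x_fake + c)
            grad_w += (1.0 - p_real) * x_real - p_fake * x_fake
            grad_c += (1.0 - p_real) - p_fake
        w += d_lr * grad_w / batch
        c += d_lr * grad_c / batch

        # Generator step: gradient ascent on log D(fake), i.e. try to fool D.
        grad_a = grad_b = 0.0
        for _ in range(batch):
            z = rng.gauss(0.0, 1.0)
            p_fake = sigmoid(w * (a * z + b) + c)
            grad_a += (1.0 - p_fake) * w * z
            grad_b += (1.0 - p_fake) * w
        a += g_lr * grad_a / batch
        b += g_lr * grad_b / batch

    return {"gen_mean": b, "gen_scale": a, "real_mean": real_mean}


if __name__ == "__main__":
    print(train_toy_gan())
```

Under these assumptions the generator’s offset `b` should drift from 0 toward the real mean as the two players push against each other. A discriminator this simple can only notice where the samples are centred, not how spread out they are, so the sketch matches the mean and little else.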

The term deepfake combines “deep learning” and “fake.” While early deepfakes focused on entertainment or celebrity impersonations, in the 2020s they’ve become a tool for fraud, political manipulation, cyberbullying, and more. Deepfake fraud cases surged by 1,740% in North America between 2022 and 2023, with financial losses exceeding $200 million in 2025 alone.

Why Deepfakes Are Fooling People Today

Deepfakes aren’t just a technological marvel — they’re a psychological exploit, a corporate threat, and a security blind spot. Here's why they’re so dangerously effective:

Asymmetry Between Generation and Detection Technologies

There’s a dangerous imbalance between attackers and defenders: the number of deepfake videos is growing by an estimated 900% a year, while detection tools, both manual and automated, struggle to keep pace, often raising false positives or missing subtle, high-quality forgeries. This leads to what experts call a "trust gap". Traditional cues, such as recognizing a face, hearing a familiar voice, or noting behavioral patterns, are no longer reliable indicators of authenticity in the age of generative AI.

Psychological Trust in Sight and Sound

Humans are hardwired to trust what they see and hear. When someone appears on video or sounds like a known authority figure — like a CEO or CFO — our cognitive defenses weaken. This innate trust is precisely what fraudsters exploit in high-stakes impersonation scams like the Arup incident.

Accessible AI Tools

Creating deepfakes no longer requires specialized labs or powerful servers. Just 20–30 seconds of someone’s speech is enough to build a convincing synthetic voice, and open-source platforms can generate facial mimicry in under an hour with modest computing power. The barrier to entry is so low that even amateur attackers can produce believable impersonations from minimal data. Detection, meanwhile, lags behind: research shows that state-of-the-art automated detection systems suffer accuracy drops of 45-50% on real-world deepfakes compared with laboratory conditions.

Corporate Vulnerabilities

Modern businesses have complex workflows and financial hierarchies but often lack robust verification protocols. In multi-tiered organizations, it is not unusual for an executive to authorize large transactions remotely. Scammers exploit this by impersonating executives on deepfake video or voice calls, knowing that employees hesitate to challenge their superiors.

How to Spot a Deepfake

Here are some ways to spot a deepfake.

Audio Deepfakes

Flat tone: AI-generated voices often sound monotone and robotic, lacking emotional depth.

Lack of natural pauses: You may not hear proper breathing sounds before speech starts.

Unnatural pacing: The rhythm can feel too even or artificial, unlike natural human speech.

Background noise anomalies:

Sometimes completely silent, even when noise is expected.

Other times, excessive ambient noise is added unnaturally to make it feel “real.”
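The “unnatural pacing” cue can be put into rough numbers. The sketch below assumes you already have the start time of each spoken word in seconds (for example from a transcription tool, which is not shown here) and measures how much the gaps between words vary. The function names and the 0.25 cut-off are illustrative assumptions, not calibrated values, and a low score is only a hint that a clip deserves a closer listen.

```python
from statistics import mean, pstdev


def pacing_variability(word_onsets):
    """Coefficient of variation of the gaps between consecutive word onsets."""
    gaps = [later - earlier for earlier, later in zip(word_onsets, word_onsets[1:])]
    if len(gaps) < 2 or mean(gaps) <= 0:
        raise ValueError("need at least three increasing timestamps")
    return pstdev(gaps) / mean(gaps)


def pacing_looks_too_even(word_onsets, min_variability=0.25):
    # min_variability is a made-up threshold for illustration only.
    return pacing_variability(word_onsets) < min_variability


if __name__ == "__main__":
    metronomic = [0.0, 0.4, 0.8, 1.2, 1.6, 2.0]  # every gap is the same
    natural = [0.0, 0.3, 0.5, 1.4, 1.6, 2.5]     # gaps vary a lot
    print(pacing_looks_too_even(metronomic))
    print(pacing_looks_too_even(natural))
```

Natural speech speeds up, slows down, and pauses, so its gaps vary; a perfectly even rhythm scores close to zero.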

Image-Based Deepfakes (AI-Generated Photos)

Zoom in on inconsistencies:

Crooked or melting buildings

Hands with too many or fused fingers

Asymmetrical or malformed facial features

Visual oddities:

Hair may look “painted” or unnaturally clumped.

Shadows might fall in impossible directions or be missing altogether.

Glossy, plastic-like skin tones are common.

Lighting mismatches: Illumination across the subject may feel flat or uneven, unlike how real light behaves.

Video Deepfakes

Eye blinking: Unnatural or infrequent blinking is a major giveaway.

Facial edge artifacts:

Edges of the face or head may appear jagged or pixelated.

Some areas may flicker slightly or misalign with the background.

Lip-sync issues:

Mouth movements may not match the spoken words.

For sounds requiring closed lips (like “B” or “P”), the mouth might remain slightly open.

Tongue and teeth positioning often looks off or blurred.

Static expressions: Lip-sync deepfakes often alter only the mouth, so the rest of the face stays static and expressions feel hollow or disconnected.
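The blinking cue is one of the easier ones to quantify. The sketch below assumes some other tool (not shown) has already labelled each video frame with whether the eyes are open, and simply counts open-to-closed transitions per minute. The function names and the `low` and `high` bounds are placeholder assumptions rather than clinical values, and newer deepfakes often blink quite convincingly, so treat the result as one signal among many.

```python
def blinks_per_minute(eye_open, fps):
    """Count open-to-closed transitions in per-frame flags, scaled to a per-minute rate."""
    if fps <= 0 or not eye_open:
        raise ValueError("need a positive frame rate and at least one frame")
    blinks = sum(1 for prev, cur in zip(eye_open, eye_open[1:]) if prev and not cur)
    minutes = len(eye_open) / fps / 60.0
    return blinks / minutes


def blink_rate_is_unusual(eye_open, fps, low=5.0, high=40.0):
    # low and high are illustrative bounds, not validated thresholds.
    rate = blinks_per_minute(eye_open, fps)
    return rate < low or rate > high


if __name__ == "__main__":
    fps = 10
    frames = [True] * 600                 # one minute of video, eyes open
    for i in range(15):                   # add 15 short blinks
        start = 20 + i * 40
        frames[start] = frames[start + 1] = False
    print(blinks_per_minute(frames, fps))
    print(blink_rate_is_unusual([True] * 600, fps))
```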

Guide to Identifying Deepfakes

These eight questions are intended to help people judge whether a video might be a deepfake.

Pay attention to the face. High-end deepfake manipulations are almost always facial transformations.

Pay attention to the cheeks and forehead. Does the skin appear too smooth or too wrinkly? Is the agedness of the skin similar to the agedness of the hair and eyes? Deepfakes may be incongruent on some dimensions.

Pay attention to the eyes and eyebrows. Do shadows appear in places that you would expect? Deepfakes may fail to fully represent the natural physics of a scene.

Pay attention to the glasses. Is there any glare? Is there too much glare? Does the angle of the glare change when the person moves? Once again, deepfakes may fail to fully represent the natural physics of lighting.

Pay attention to the facial hair or lack thereof. Does this facial hair look real? Deepfakes might add or remove a mustache, sideburns, or beard, but they may fail to make facial hair transformations fully natural.

Pay attention to facial moles. Does the mole look real?

Pay attention to blinking. Does the person blink enough or too much?

Pay attention to the lip movements. Some deepfakes are based on lip syncing. Do the lip movements look natural?

Defense Against Deepfakes

For Organizations

Multi-channel authentication: Always verify high-value requests via at least one separate channel.

Segregation of duties: No single person should hold all the authority for large transfers.

Simulation training: Include deepfake scenarios in cybersecurity drills.

AI detection tools: Invest in software that analyzes media for manipulation artifacts—and update as deepfake tech evolves.
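The first two controls above, multi-channel authentication and segregation of duties, can be expressed as a simple release rule. The sketch below is hypothetical throughout: the `TransferRequest` fields, the channel names, and the 10,000 threshold are invented for illustration, and a real payment system would enforce this in its own workflow tooling.

```python
from dataclasses import dataclass, field

HIGH_VALUE_THRESHOLD = 10_000  # hypothetical cut-off; set by your own policy


@dataclass
class TransferRequest:
    amount: float
    request_channel: str                               # where the request arrived, e.g. "video_call"
    confirmations: set = field(default_factory=set)    # channels on which it was re-confirmed
    approvers: set = field(default_factory=set)        # IDs of staff who signed off


def may_release(request, threshold=HIGH_VALUE_THRESHOLD):
    """Allow a high-value transfer only with a second channel and two approvers."""
    if request.amount < threshold:
        return True
    # A confirmation on the same channel as the request does not count.
    independent = request.confirmations - {request.request_channel}
    return len(independent) >= 1 and len(request.approvers) >= 2


if __name__ == "__main__":
    req = TransferRequest(250_000, "video_call", {"video_call"}, {"alice"})
    print(may_release(req))   # blocked: no independent channel, one approver
    req.confirmations.add("phone_callback")
    req.approvers.add("bob")
    print(may_release(req))   # allowed once both controls are satisfied
```

The point of the design is that a convincing video call, on its own, can never be enough to move a large sum.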

For Individuals

Increase awareness: Understand that video/audio can be fabricated.

Verify promptly: If a request seems urgent or arrived only by audio or video, pause and call the person back using contact details you already trust.

Be critical online: Don’t trust authoritative-looking videos on social media without reputable corroboration; check official sites or reports.

The Road Ahead

Deepfake technology is advancing at lightning speed. As platforms struggle to keep pace, the burden falls on users and businesses to think critically and verify. Only with better tech tools, updated policies, and deepfake awareness training can we hope to mitigate this insidious risk.

Familiar voices and faces no longer guarantee authenticity. The Arup £20 million scam is a stark warning: even top firms can be floored by convincingly false audio and video. In 2025 and beyond, “trust but verify” must be the motto, especially when technology is weaponized against us.

Stay aware. Stay safe.